Inspiration comes from https://github.com/jcjohnson/neural-style.

Because installing all the required toolchains on OS X 10.11.3 is a bit challenging, here are my installation steps.

cd workspace/

git clone git@github.com:jcjohnson/neural-style.git

git clone https://github.com/torch/distro.git ~/torch --recursive

cd ~/torch; bash install-deps;

This will fail a few times if you already have some of the dependencies installed in different versions. I needed to fiddle around unlinking things.

brew unlink qt

brew linkapps qt

brew link --overwrite wget

bash install-deps;

brew unlink cmake

bash install-deps;

brew unlink imagemagick

brew unlink brew-cask

bash install-deps;

Anyhow, make sure the install-deps script doesn’t error out, otherwise you’ll be missing dependencies.
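Since install-deps can fail partway through, it helps to make each step report its exit status instead of scrolling past. Below is a minimal sketch in POSIX sh; `check_step` is a hypothetical helper name, not something shipped with torch/distro.

```shell
#!/bin/sh
# Hypothetical helper (not part of torch/distro): run one step and say
# clearly whether it succeeded, so a failed install-deps run is not missed.
check_step() {
  if "$@"; then
    echo "OK: $*"
  else
    step_status=$?   # exit status of the command that just failed
    echo "FAILED (exit $step_status): $*" >&2
    return "$step_status"
  fi
}

# Intended use from ~/torch (commented out so the sketch has no side effects):
# check_step bash install-deps && check_step ./install.sh
```

With this, `./install.sh` only runs once `install-deps` has exited cleanly.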

./install.sh

This succeeds. It tells you to activate the environment, but I’m using a non-standard shell (fish), so I mess with the fish config instead.

. /Users/jason/torch/install/bin/torch-activate

th #checking this exists in path, and it doesn't

luarocks install image

source ~/.profile

. ~/.profile

vim ~/.bashrc

subl /Users/jason/torch/install/bin/torch-activate

subl ~/.config/fish/config.fish

th #now it exists
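The "does `th` exist in the path" check can also be done without starting the REPL. This is a small POSIX sh sketch; `on_path` is a made-up helper name, and fish users would more likely reach for its `type` builtin.

```shell
# Report whether a command can be found through PATH.
on_path() {
  if command -v "$1" >/dev/null 2>&1; then
    echo "$1 found at $(command -v "$1")"
  else
    echo "$1 is not on PATH" >&2
    return 1
  fi
}

# Intended use after editing the shell config (commented out here):
# on_path th
```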

The change to config.fish that was necessary (for my user, my paths) was:

# . /Users/jason/torch/install/bin/torch-activate

set LUA_PATH '/Users/jason/.luarocks/share/lua/5.1/?.lua;/Users/jason/.luarocks/share/lua/5.1/?/init.lua;/Users/jason/torch/install/share/lua/5.1/?.lua;/Users/jason/torch/install/share/lua/5.1/?/init.lua;./?.lua;/Users/jason/torch/install/share/luajit-2.1.0-beta1/?.lua;/usr/local/share/lua/5.1/?.lua;/usr/local/share/lua/5.1/?/init.lua'

set LUA_CPATH '/Users/jason/.luarocks/lib/lua/5.1/?.so;/Users/jason/torch/install/lib/lua/5.1/?.so;./?.so;/usr/local/lib/lua/5.1/?.so;/usr/local/lib/lua/5.1/loadall.so'

set PATH /Users/jason/torch/install/bin $PATH

set LD_LIBRARY_PATH /Users/jason/torch/install/lib $LD_LIBRARY_PATH

set DYLD_LIBRARY_PATH /Users/jason/torch/install/lib $DYLD_LIBRARY_PATH

set LUA_CPATH '/Users/jason/torch/install/lib/?.dylib;'$LUA_CPATH

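Convert the bash syntax to fish syntax by replacing “export” with “set” and each “:” with a space. Note that fish only passes a variable on to child processes such as `th` if it is exported, which is what `set -x` does.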
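The conversion rule can be sketched as a small sed filter. It assumes the activate script consists of simple `export NAME=value` lines; `bash_to_fish` is a hypothetical name, and it emits `set -x` (fish's export flag) rather than plain `set`. Treat the output as a starting point to review by hand, not a general bash-to-fish translator.

```shell
# Convert simple `export NAME=value` lines into fish `set -x NAME value`
# lines. Lines that do not start with "export " pass through unchanged.
bash_to_fish() {
  sed -e '/^export /s/:/ /g' \
      -e 's/^export \([A-Za-z_][A-Za-z0-9_]*\)=/set -x \1 /'
}

# Demo on a line in the same shape as the PATH export:
echo 'export PATH=/Users/jason/torch/install/bin:$PATH' | bash_to_fish
# prints: set -x PATH /Users/jason/torch/install/bin $PATH
```

The `;`-separated LUA_PATH and LUA_CPATH values contain no colons, so they come through untouched apart from the `export` to `set -x` change.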

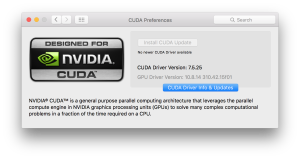

I have an NVIDIA graphics card, so I download and install CUDA.

Then we can continue installing dependencies.

brew install protobuf

luarocks install loadcaffe

luarocks install torch

luarocks install nn

I found that with Xcode 7 the clang compiler is too new for nvcc, so cutorch and cunn fail to build. The error you would see is this:

nvcc fatal : The version ('70002') of the host compiler ('Apple clang') is not supported

Sometimes the error messages are garbled, presumably because the build runs in parallel. I downloaded Xcode 6.4 and swapped it in for Xcode 7:

cd /Applications

sudo mv Xcode.app/ Xcode7.app

sudo mv Xcode\ 2.app/ Xcode.app # this is Xcode 6.4 when you install it

sudo xcode-select -s /Applications/Xcode.app/Contents/Developer

clang -v

#Apple LLVM version 6.1.0 (clang-602.0.53) (based on LLVM 3.6.0svn)

#Target: x86_64-apple-darwin15.3.0

#Thread model: posix
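To check which compiler version nvcc will see before kicking off a long build, the major version can be pulled out of the `clang -v` banner. This is a minimal sketch assuming the "Apple LLVM version X.Y.Z" banner format shown above; `apple_clang_major` is a hypothetical helper name.

```shell
# Extract the major version number from `clang -v` output read on stdin.
apple_clang_major() {
  sed -n 's/^Apple LLVM version \([0-9][0-9]*\)\..*/\1/p' | head -n 1
}

# Demo on the banner line shown above:
echo 'Apple LLVM version 6.1.0 (clang-602.0.53) (based on LLVM 3.6.0svn)' | apple_clang_major
# prints: 6

# On a real machine (clang writes its banner to stderr):
# clang -v 2>&1 | apple_clang_major
```

In my case a result of 7 meant the nvcc error above, while 6 got past it.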

But now I get another fatal issue:

/usr/local/cuda/include/common_functions.h:65:10: fatal error: 'string.h' file not found

#include <string.h>

This seems to be a known problem, see cutorch issue 241. It can be resolved by running:

xcode-select --install

This gets me a little further. Now the issue seems related to torch:

make[2]: *** No rule to make target `/Applications/Xcode.app/Contents/Developer/Platforms/MacOSX.platform/Developer/SDKs/MacOSX10.11.sdk/System/Library/Frameworks/Accelerate.framework', needed by `lib/THC/libTHC.dylib'. Stop.

make[2]: *** Waiting for unfinished jobs....

[ 64%] Building C object lib/THC/CMakeFiles/THC.dir/THCGeneral.c.o

[ 69%] Building C object lib/THC/CMakeFiles/THC.dir/THCAllocator.c.o

[ 71%] Building C object lib/THC/CMakeFiles/THC.dir/THCStorage.c.o

[ 76%] Building C object lib/THC/CMakeFiles/THC.dir/THCTensorCopy.c.o

[ 76%] Building C object lib/THC/CMakeFiles/THC.dir/THCStorageCopy.c.o

[ 76%] Building C object lib/THC/CMakeFiles/THC.dir/THCTensor.c.o

/tmp/luarocks_cutorch-scm-1-3748/cutorch/lib/THC/THCGeneral.c:633:7: warning: absolute value function 'abs' given an argument of type 'long' but has parameter of type 'int' which may cause truncation of value [-Wabsolute-value]

  if (abs(state->heapDelta) < heapMaxDelta) {

      ^

/tmp/luarocks_cutorch-scm-1-3748/cutorch/lib/THC/THCGeneral.c:633:7: note: use function 'labs' instead

  if (abs(state->heapDelta) < heapMaxDelta) {

      ^~~

      labs

1 warning generated.

make[1]: *** [lib/THC/CMakeFiles/THC.dir/all] Error 2

make: *** [all] Error 2

Error: Build error: Failed building.

Since I only just updated to OS X 10.11, I presume the frameworks from the 10.10 SDK are still fine. So this hack, which makes Accelerate.framework appear where the build expects the 10.11 SDK, should be OK as well:

ln -s "/Applications/Xcode.app/Contents/Developer/Platforms/MacOSX.platform/Developer/SDKs/MacOSX10.10.sdk" "/Applications/Xcode.app/Contents/Developer/Platforms/MacOSX.platform/Developer/SDKs/MacOSX10.11.sdk"
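A slightly more careful version of the same hack checks that the source SDK exists and refuses to touch an SDK that is already there. This is a sketch only; `link_sdk` is a hypothetical helper, and the SDK names in the usage line are the ones from my machine.

```shell
# Hypothetical helper: symlink an installed SDK under the name the build
# asks for, without overwriting anything that already exists.
link_sdk() {
  sdk_root=$1   # directory holding the *.sdk folders
  have=$2       # SDK that is actually installed, e.g. MacOSX10.10.sdk
  want=$3       # SDK name the build looks for, e.g. MacOSX10.11.sdk
  if [ -e "$sdk_root/$want" ] || [ -L "$sdk_root/$want" ]; then
    echo "$want already present, leaving it alone"
    return 0
  fi
  if [ ! -d "$sdk_root/$have" ]; then
    echo "$have not found in $sdk_root" >&2
    return 1
  fi
  ln -s "$sdk_root/$have" "$sdk_root/$want" && echo "linked $want -> $have"
}

# Intended use (commented out so the sketch has no side effects):
# link_sdk /Applications/Xcode.app/Contents/Developer/Platforms/MacOSX.platform/Developer/SDKs \
#   MacOSX10.10.sdk MacOSX10.11.sdk
```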

Finally I try to install cutorch, with success.

luarocks install cutorch

luarocks install cunn #this installs fine as well, it didn't before

Everything should be good to go. But nothing works smoothly.

jason@jmbp15-nvidia ~/w/neural-style (master)>

th neural_style.lua -style_image IMG_2663.JPG -content_image IMG_2911.JPG

[libprotobuf WARNING google/protobuf/io/coded_stream.cc:537] Reading dangerously large protocol message. If the message turns out to be larger than 1073741824 bytes, parsing will be halted for security reasons. To increase the limit (or to disable these warnings), see CodedInputStream::SetTotalBytesLimit() in google/protobuf/io/coded_stream.h.

[libprotobuf WARNING google/protobuf/io/coded_stream.cc:78] The total number of bytes read was 574671192

Successfully loaded models/VGG_ILSVRC_19_layers.caffemodel

conv1_1: 64 3 3 3

conv1_2: 64 64 3 3

conv2_1: 128 64 3 3

conv2_2: 128 128 3 3

conv3_1: 256 128 3 3

conv3_2: 256 256 3 3

conv3_3: 256 256 3 3

conv3_4: 256 256 3 3

conv4_1: 512 256 3 3

conv4_2: 512 512 3 3

conv4_3: 512 512 3 3

conv4_4: 512 512 3 3

conv5_1: 512 512 3 3

conv5_2: 512 512 3 3

conv5_3: 512 512 3 3

conv5_4: 512 512 3 3

fc6: 1 1 25088 4096

fc7: 1 1 4096 4096

fc8: 1 1 4096 1000

THCudaCheck FAIL file=/tmp/luarocks_cutorch-scm-1-5715/cutorch/lib/THC/generic/THCStorage.cu line=40 error=2 : out of memory

/Users/jason/torch/install/bin/luajit: /Users/jason/torch/install/share/lua/5.1/nn/utils.lua:11: cuda runtime error (2) : out of memory at /tmp/luarocks_cutorch-scm-1-5715/cutorch/lib/THC/generic/THCStorage.cu:40

stack traceback:

[C]: in function 'resize'

/Users/jason/torch/install/share/lua/5.1/nn/utils.lua:11: in function 'torch_Storage_type'

/Users/jason/torch/install/share/lua/5.1/nn/utils.lua:57: in function 'recursiveType'

/Users/jason/torch/install/share/lua/5.1/nn/Module.lua:123: in function 'type'

/Users/jason/torch/install/share/lua/5.1/nn/utils.lua:45: in function 'recursiveType'

/Users/jason/torch/install/share/lua/5.1/nn/utils.lua:41: in function 'recursiveType'

/Users/jason/torch/install/share/lua/5.1/nn/Module.lua:123: in function 'cuda'

neural_style.lua:76: in function 'main'

neural_style.lua:500: in main chunk

[C]: in function 'dofile'

...ason/torch/install/lib/luarocks/rocks/trepl/scm-1/bin/th:145: in main chunk

[C]: at 0x0109a0dd50

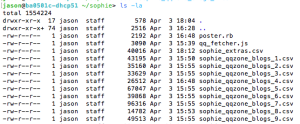

I guess I ran out of GPU memory? This looks like the issue reported at https://github.com/jcjohnson/neural-style/issues/150. My pictures aren’t that small, so I’ll just resize them.

jason@jmbp15-nvidia ~/w/neural-style (master)> ls -lh

total 5480

-rw-r--r--@ 1 jason staff 2.4M 11 Mar 21:39 IMG_2663.JPG

-rw-r--r--@ 1 jason staff 235K 11 Mar 21:38 IMG_2911.JPG

-rw-r--r-- 1 jason staff 9.1K 11 Mar 20:34 INSTALL.md

-rw-r--r-- 1 jason staff 1.1K 11 Mar 20:34 LICENSE

-rw-r--r-- 1 jason staff 16K 11 Mar 20:34 README.md

drwxr-xr-x 4 jason staff 136B 11 Mar 20:34 examples

drwxr-xr-x 8 jason staff 272B 12 Mar 06:24 models

-rw-r--r-- 1 jason staff 16K 11 Mar 20:34 neural_style.lua

jason@jmbp15-nvidia ~/w/neural-style (master)> sips IMG_2663.JPG -Z 680

/Users/jason/workspace/neural-style/IMG_2663.JPG

[ (kCGColorSpaceDeviceRGB)] ( 0 0 0 1 )

/Users/jason/workspace/neural-style/IMG_2663.JPG

jason@jmbp15-nvidia ~/w/neural-style (master)> sips IMG_2911.JPG -Z 680

/Users/jason/workspace/neural-style/IMG_2911.JPG

[ (kCGColorSpaceDeviceRGB)] ( 0 0 0 1 )

/Users/jason/workspace/neural-style/IMG_2911.JPG

jason@jmbp15-nvidia ~/w/neural-style (master)> ls -lh

total 2224

-rw-r--r-- 1 jason staff 105K 12 Mar 06:27 IMG_2663.JPG

-rw-r--r-- 1 jason staff 64K 12 Mar 06:28 IMG_2911.JPG

-rw-r--r-- 1 jason staff 9.1K 11 Mar 20:34 INSTALL.md

-rw-r--r-- 1 jason staff 1.1K 11 Mar 20:34 LICENSE

-rw-r--r-- 1 jason staff 16K 11 Mar 20:34 README.md

drwxr-xr-x 4 jason staff 136B 11 Mar 20:34 examples

drwxr-xr-x 8 jason staff 272B 12 Mar 06:24 models

-rw-r--r-- 1 jason staff 16K 11 Mar 20:34 neural_style.lua

jason@jmbp15-nvidia ~/w/neural-style (master)>
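Shrinking the files with sips is one lever; the script has a few of its own. This is a minimal sketch, assuming the `-image_size` and `-optimizer` options described in the neural-style README. The value 256 is an arbitrary illustration, and `low_memory_cmd` is a hypothetical helper that only prints the command rather than running it. I have not measured how much memory this saves on this machine.

```shell
# Print (not run) a neural_style.lua command line with memory-saving options.
# $1 = style image, $2 = content image, $3 = max side length in pixels
# (defaults to 256 here, an arbitrary choice for illustration).
low_memory_cmd() {
  printf 'th neural_style.lua -style_image %s -content_image %s -image_size %s -optimizer adam\n' \
    "$1" "$2" "${3:-256}"
}

low_memory_cmd IMG_2663.JPG IMG_2911.JPG
# prints: th neural_style.lua -style_image IMG_2663.JPG -content_image IMG_2911.JPG -image_size 256 -optimizer adam
```

If the GPU simply does not have enough memory, the README also describes a CPU mode via `-gpu -1`, which is much slower but avoids CUDA entirely.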

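Well, resizing didn’t help; it still runs out of memory. (The traceback fails in `cuda`, called from neural_style.lua:76 right after the model is loaded, so image size may not be the problem at all.) By default neural_style.lua uses the nn backend, so I try the cudnn backend instead, but that doesn’t work either: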

jason@jmbp15-nvidia ~/w/neural-style (master)>

th neural_style.lua -style_image IMG_2663.JPG -content_image IMG_2911.JPG -backend cudnn

nil

/Users/jason/torch/install/bin/luajit: /Users/jason/torch/install/share/lua/5.1/trepl/init.lua:384: /Users/jason/torch/install/share/lua/5.1/trepl/init.lua:384: /Users/jason/torch/install/share/lua/5.1/cudnn/ffi.lua:1279: 'libcudnn (R4) not found in library path.

Please install CuDNN from https://developer.nvidia.com/cuDNN

Then make sure files named as libcudnn.so.4 or libcudnn.4.dylib are placed in your library load path (for example /usr/local/lib , or manually add a path to LD_LIBRARY_PATH)

stack traceback:

[C]: in function 'error'

/Users/jason/torch/install/share/lua/5.1/trepl/init.lua:384: in function 'require'

neural_style.lua:64: in function 'main'

neural_style.lua:500: in main chunk

[C]: in function 'dofile'

...ason/torch/install/lib/luarocks/rocks/trepl/scm-1/bin/th:145: in main chunk

[C]: at 0x0106769d50

So I go to https://developer.nvidia.com/rdp/cudnn-download and download cudnn-7.0-osx-x64-v4.0-prod.tgz and follow their install guide:

PREREQUISITES

CUDA 7.0 and a GPU of compute capability 3.0 or higher are required.

ALL PLATFORMS

Extract the cuDNN archive to a directory of your choice, referred to below as `<installpath>`.

Then follow the platform-specific instructions as follows.

LINUX

`cd <installpath>`

export LD_LIBRARY_PATH=`pwd`:$LD_LIBRARY_PATH

Add `<installpath>` to your build and link process by adding `-I<installpath>` to your compile line and `-L<installpath> -lcudnn` to your link line.

OS X

`cd <installpath>`

export DYLD_LIBRARY_PATH=`pwd`:$DYLD_LIBRARY_PATH

Add `<installpath>` to your build and link process by adding `-I<installpath>` to your compile line and `-L<installpath> -lcudnn` to your link line.

WINDOWS

Add `<installpath>` to the PATH environment variable.

In your Visual Studio project properties, add `<installpath>` to the Include Directories and Library Directories lists and add cudnn.lib to Linker->Input->Additional Dependencies.

Extracting the tgz file gives me a cuda folder. I just need to add it to my library path:

jason@jmbp15-nvidia ~/workspace> cd cuda/

jason@jmbp15-nvidia ~/w/cuda> set DYLD_LIBRARY_PATH (pwd) $DYLD_LIBRARY_PATH

jason@jmbp15-nvidia ~/w/cuda> echo $DYLD_LIBRARY_PATH

/Users/jason/workspace/cuda /Users/jason/torch/install/lib

jason@jmbp15-nvidia ~/w/cuda [127]> tree

.

├── cd

├── include

│   └── cudnn.h

└── lib

    ├── libcudnn.4.dylib

    ├── libcudnn.dylib -> libcudnn.4.dylib

    └── libcudnn_static.a

2 directories, 5 files

jason@jmbp15-nvidia ~/w/cuda> set LD_LIBRARY_PATH (pwd)/lib/ $LD_LIBRARY_PATH

jason@jmbp15-nvidia ~/w/cuda> echo $LD_LIBRARY_PATH

/Users/jason/workspace/cuda/lib/ /Users/jason/torch/install/lib
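The cudnn error asks for `libcudnn.4.dylib` to sit directly in a directory on the library path, and the loader does not look into subdirectories. In the tree above the file lives in `cuda/lib`, not in `cuda` itself, so the first `set DYLD_LIBRARY_PATH (pwd)` is presumably not enough on its own. Here is a small POSIX sh sketch to check which candidate directory actually contains the library; `find_lib_dir` is a hypothetical helper name.

```shell
# Print the first directory (from the remaining arguments) that directly
# contains the file named by $1; return 1 if none of them does.
find_lib_dir() {
  lib_name=$1
  shift
  for dir in "$@"; do
    if [ -e "$dir/$lib_name" ]; then
      echo "$dir"
      return 0
    fi
  done
  echo "$lib_name not found in: $*" >&2
  return 1
}

# Intended use with the directories from this post (commented out here):
# find_lib_dir libcudnn.4.dylib ~/workspace/cuda ~/workspace/cuda/lib ~/torch/install/lib
```

Whatever directory this prints is the one that belongs in DYLD_LIBRARY_PATH.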