Let’s say we have our own DNS server (e.g. running dnsmasq) at 10.7.0.1, and some internal domains, such as names ending in ds, that we want that machine to resolve. We can edit /etc/systemd/resolved.conf (e.g. with vi) and add the following under its [Resolve] section:

DNS=8.8.8.8

FallbackDNS=10.7.0.1

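The intent is to resolve with 8.8.8.8 by default and fall back to 10.7.0.1 for names it cannot resolve. Note, however, that resolved.conf(5) describes FallbackDNS= as used only when no other DNS server information is known. It is not consulted when the primary server fails to resolve a name, which is why the per-link settings below are needed. After modifying this file, run systemctl restart systemd-resolved to restart the service.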
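If you prefer to script the edit instead of using vi, here is a minimal sketch. The helper name set_resolved_option is hypothetical. It assumes GNU sed, a file that already has a [Resolve] section (the stock file does), and an option that appears at most once, possibly commented out.

```bash
#!/usr/bin/env bash
# Hypothetical helper: set KEY=VALUE in a resolved.conf-style file.
# Assumes the file already has a [Resolve] section and that KEY appears
# at most once, possibly commented out as "#KEY=...".
set_resolved_option() {
    local file=$1 key=$2 value=$3
    if grep -Eq "^#?${key}=" "$file"; then
        # Replace the existing (possibly commented-out) line in place (GNU sed -i).
        sed -i -E "s|^#?${key}=.*|${key}=${value}|" "$file"
    else
        # No such line yet: append it. This only lands in [Resolve]
        # if that is the last section in the file.
        printf '%s=%s\n' "$key" "$value" >> "$file"
    fi
}

# Example (as root):
#   set_resolved_option /etc/systemd/resolved.conf DNS 8.8.8.8
#   set_resolved_option /etc/systemd/resolved.conf FallbackDNS 10.7.0.1
#   systemctl restart systemd-resolved
```

The anchored pattern ^#?DNS= does not touch FallbackDNS=, DNSSEC= or DNSStubListener=, because the key must be followed directly by "=".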

root@host # resolvectl

Global

Protocols: -LLMNR -mDNS -DNSOverTLS DNSSEC=no/unsupported

resolv.conf mode: foreign

DNS Servers: 1.1.1.1 8.8.8.8

Fallback DNS Servers: 10.7.0.1

Also run

resolvectl dns interface 10.7.0.1

resolvectl domain interface ds

to set the DNS server and domain for your VPN device, where interface is the name of the VPN interface (for example, your WireGuard interface). For example:

root@henderson /h/jason# resolvectl

Global

Protocols: -LLMNR -mDNS -DNSOverTLS DNSSEC=no/unsupported

resolv.conf mode: foreign

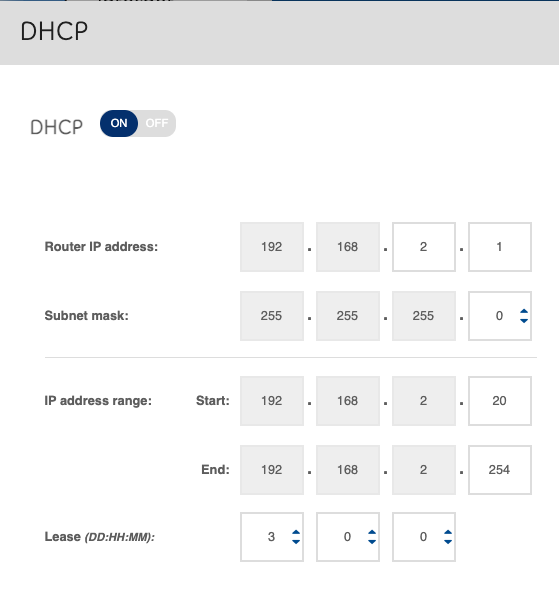

Current DNS Server: 192.168.2.1

DNS Servers: 192.168.2.1

Fallback DNS Servers: 10.7.0.1

[...omitted...]

Link 8 (interface)

Current Scopes: DNS

Protocols: +DefaultRoute +LLMNR -mDNS -DNSOverTLS DNSSEC=no/unsupported

DNS Servers: 10.7.0.1

DNS Domain: ds
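The two resolvectl commands can be wrapped in a small function that refuses to run against a non-existent interface. This is a minimal sketch; set_link_dns is a hypothetical name, and it assumes a Linux system with sysfs, systemd-resolved, and root privileges. Like the commands themselves, the settings do not survive a reboot.

```bash
#!/usr/bin/env bash
# Hypothetical helper: set the DNS server and domain for one link
# using the same two resolvectl commands shown above.
set_link_dns() {
    local iface=$1 server=$2 domain=$3
    # Bail out early if the interface does not exist (Linux sysfs check).
    if [ ! -e "/sys/class/net/$iface" ]; then
        echo "no such interface: $iface" >&2
        return 1
    fi
    resolvectl dns "$iface" "$server" || return 1
    resolvectl domain "$iface" "$domain" || return 1
}

# Example (as root): set_link_dns interface 10.7.0.1 ds
```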

Now both public names and names that only resolve over the corporate VPN work, for example:

root@host # ping google.com

PING google.com (172.217.13.142) 56(84) bytes of data.

64 bytes from yul02s05-in-f14.1e100.net (172.217.13.142): icmp_seq=1 ttl=117 time=14.0 ms

root@host # ping glassbox.ds

PING glassbox.ds (10.7.0.1) 56(84) bytes of data.

64 bytes from 10.7.0.1 (10.7.0.1): icmp_seq=1 ttl=64 time=84.3 ms

This shows that the internal name resolves as well as the public one. Note that google.com resolves to a server close to you, so the address you see will likely differ.
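To repeat this check without sending pings, here is a sketch using getent hosts, which looks names up through the system's NSS hosts configuration, roughly the same path ping takes. check_resolves is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# Hypothetical helper: resolve each name via NSS (getent hosts) and print
# "OK name -> address" or "FAIL name". Returns 1 if any name failed.
check_resolves() {
    local name addr status=0
    for name in "$@"; do
        # Take the address column of the first result, if any.
        addr=$(getent hosts "$name" | awk 'NR==1 {print $1}')
        if [ -n "$addr" ]; then
            echo "OK $name -> $addr"
        else
            echo "FAIL $name"
            status=1
        fi
    done
    return "$status"
}

# Example: check_resolves google.com glassbox.ds
```

For google.com, getent may print an IPv6 address first; either address family counts as success here.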

Be aware

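Check /etc/resolv.conf (e.g. with vi).

This file may override your config, since many programs read it directly instead of asking systemd-resolved. Verify that nameserver 127.0.0.53 (the systemd-resolved stub resolver) is listed in this file.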

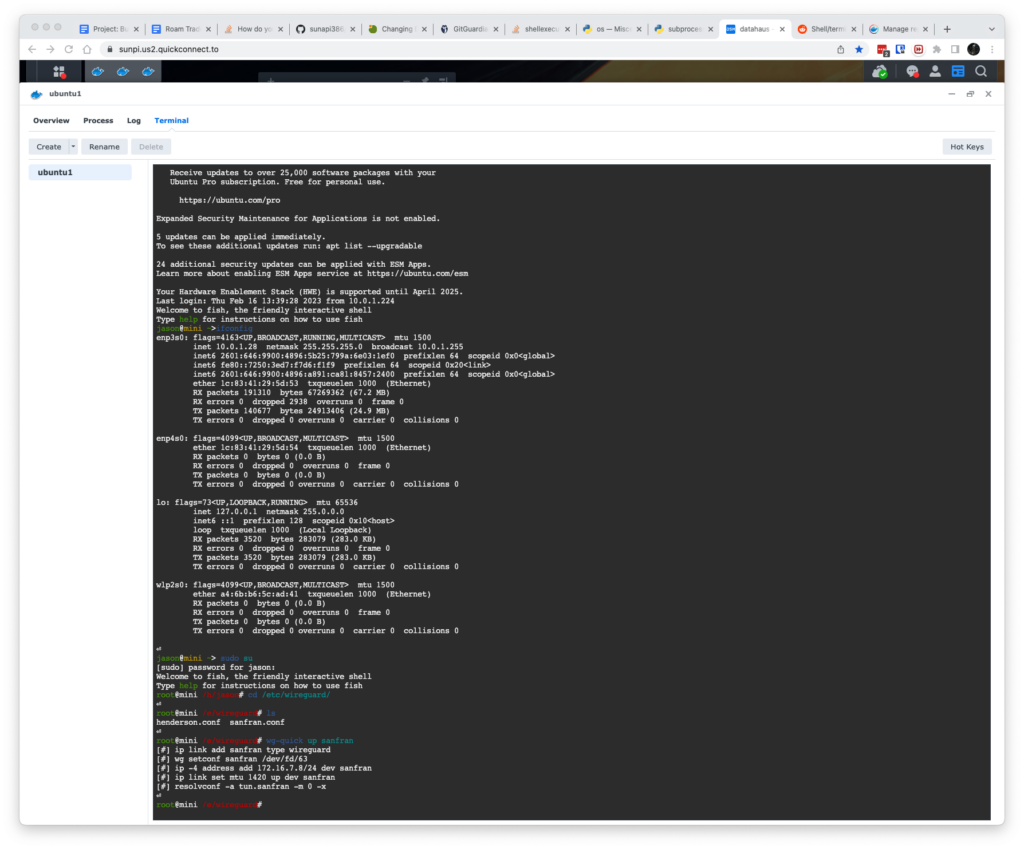
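This check can also be scripted. The sketch below (stub_resolver_status is a hypothetical name) also reports when 127.0.0.53 is present but not first, because the glibc resolver tries nameservers in the order they are listed, so an entry above the stub gets queried first.

```bash
#!/usr/bin/env bash
# Hypothetical helper: inspect a resolv.conf (default /etc/resolv.conf).
# Exit 0: 127.0.0.53 is the first nameserver.
# Exit 1: it is listed, but another server comes first.
# Exit 2: it is not listed at all.
stub_resolver_status() {
    local file=${1:-/etc/resolv.conf} first
    # First "nameserver" entry; glibc queries servers in listed order.
    first=$(awk '$1 == "nameserver" {print $2; exit}' "$file")
    if [ "$first" = "127.0.0.53" ]; then
        echo "ok: 127.0.0.53 is the first nameserver"
    elif awk '$1 == "nameserver" && $2 == "127.0.0.53" {found=1} END {exit !found}' "$file"; then
        echo "warning: 127.0.0.53 is listed but $first is queried first"
        return 1
    else
        echo "missing: 127.0.0.53 is not listed"
        return 2
    fi
}

# Example: stub_resolver_status /etc/resolv.conf
```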

Sometimes after a reboot I found that this file had been modified, and I went looking for the cause. Edit: I found it. According to https://rakhesh.com/linux-bsd/wireguard-search-domain/, the WireGuard config file can specify DNS servers and search domains. From the wg-quick(8) man page:

DNS: a comma-separated list of IP (v4 or v6) addresses to be set as the interface’s DNS servers, or non-IP hostnames to be set as the interface’s DNS search domains. May be specified multiple times. Upon bringing the interface up, this runs 'resolvconf -a tun.INTERFACE -m 0 -x' and upon bringing it down, this runs 'resolvconf -d tun.INTERFACE'. If these particular invocations of resolvconf(8) are undesirable, the PostUp and PostDown keys below may be used instead.

So the [Interface] section of the WireGuard config can contain a line such as:

DNS = 10.7.0.1, ds

It is WireGuard (wg-quick, through resolvconf) that is modifying /etc/resolv.conf.

After adding this line and bringing the interface up again:

root@host # cat /etc/resolv.conf

# Dynamic resolv.conf(5) file for glibc resolver(3) generated by resolvconf(8)

# DO NOT EDIT THIS FILE BY HAND -- YOUR CHANGES WILL BE OVERWRITTEN

# 127.0.0.53 is the systemd-resolved stub resolver.

# run "systemd-resolve --status" to see details about the actual nameservers.

nameserver 10.7.0.1

nameserver 127.0.0.53

search ds home
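The man page quoted above names PostUp and PostDown as the alternative to resolvconf. Here is an untested sketch, assuming systemd-resolved manages DNS and resolvectl is installed. The hypothetical helper wg_resolved_hooks prints lines to put in the [Interface] section in place of the DNS = 10.7.0.1, ds line. With DNS = removed, wg-quick should no longer call resolvconf, and the per-link settings are applied directly instead. wg-quick expands %i to the interface name. PreDown is used for the cleanup because it runs while the interface still exists.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print wg-quick [Interface] hook lines that set the
# per-link DNS server and domain via resolvectl instead of resolvconf.
# "%i" is left literal on purpose: wg-quick replaces it with the interface name.
wg_resolved_hooks() {
    local server=$1 domain=$2
    cat <<EOF
PostUp = resolvectl dns %i $server; resolvectl domain %i $domain
PreDown = resolvectl revert %i
EOF
}

# Example: wg_resolved_hooks 10.7.0.1 ds
```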

Persist

Remember this?

resolvectl dns interface 10.7.0.1

resolvectl domain interface ds

Settings made with resolvectl do not persist across reboots. To persist them, it seems we need to create a .network file (read by systemd-networkd) for the interface and specify the DNS server and domain there. I also want my main interface to resolve all the other domains.

The directory contains two files, enp4s0.network and interface.network:

root@henderson /e/s/network# pwd

/etc/systemd/network

root@henderson /e/s/network# cat interface.network

[Match]

Name=interface

[Network]

DNS=10.7.0.1

Domains=ds

root@henderson /e/s/network# cat enp4s0.network

[Match]

Name=enp4s0

[Network]

DNS=192.168.2.1
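Both files follow the same pattern, so here is a minimal sketch of a generator. write_network_file is a hypothetical name. It overwrites any existing file with the same name. A real file for the main interface will usually also need its addressing settings (for example DHCP=yes), which this sketch does not write.

```bash
#!/usr/bin/env bash
# Hypothetical helper: write a minimal systemd-networkd .network file with
# per-link DNS. Args: interface, DNS server, Domains= value (may be empty),
# and an optional target directory (default /etc/systemd/network).
# Prints the path of the file it wrote.
write_network_file() {
    local iface=$1 server=$2 domains=$3 dir=${4:-/etc/systemd/network}
    local path="$dir/$iface.network"
    {
        printf '[Match]\nName=%s\n\n[Network]\nDNS=%s\n' "$iface" "$server"
        # Only emit Domains= when a value was given (enp4s0.network has none).
        if [ -n "$domains" ]; then
            printf 'Domains=%s\n' "$domains"
        fi
    } > "$path"
    echo "$path"
}

# Example (as root):
#   write_network_file interface 10.7.0.1 ds
#   systemctl restart systemd-networkd
```

For the main link, systemd.network(5) also documents the routing-only domain ~. (e.g. Domains=~.), which sends every name that does not match a more specific domain to that link. That may be worth trying if you want enp4s0 to handle all the other domains explicitly.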